Internal AI Case Study

NextraData

An AI software engineer merged 69 PRs, resolved 42 issues, and touched 278,000+ lines of code (net 59K removed) in month one. That output is equivalent to a full-time senior developer's, even though half the month was spent on setup and training.

These results are from month one, which included two weeks of agent training and codebase preparation to support AI contributions.

PRs Merged

69

~16 per week across multiple apps

Issues Resolved

42

40+ created and triaged

Net Lines Removed

59K

110K added, 169K removed

Team PR Share

57%

Majority of all merged PRs

The Engagement

NextraData brought on an Internal AI software engineer to accelerate development across their front-end applications. Month one focused on training the agent, modernizing foundational infrastructure, and building the workflows needed for the AI to contribute independently.

Month One Results

Even with two weeks dedicated to setup and training, the AI agent delivered output equivalent to a full-time senior developer. It authored 57% of all merged PRs for the month and left the codebase 59,000 lines leaner.

The agent can now verify its own changes visually before every PR, reducing review burden on the human team. Month two shifts focus to net-new product features and fully autonomous task execution.

What the AI Delivered in 31 Days

From testing infrastructure to codebase consolidation, the AI agent tackled foundational work that set the stage for sustained velocity.

Pull Requests at Scale

Authored 69 merged pull requests in 31 days across multiple front-end applications. Averaged about 16 PRs per week while also reviewing 15 PRs from other team members.

Testing Overhaul

Modernized the entire front-end testing stack, achieving 100% component coverage. Replaced outdated tooling with a modern framework in under three weeks.

Infrastructure Modernization

Restructured the repository to eliminate the team's #1 productivity bottleneck: a manual publish step required on every shared code change. Changes now take effect immediately.

Codebase Consolidation

Identified and consolidated widespread duplicate code into clean, reusable abstractions. Removed thousands of lines of redundancy while preserving all existing behavior.

Self-QA Workflow

Built a workflow to visually verify its own changes before submitting each PR. Captures screenshots and videos automatically, reducing review burden on the human team.

Issue Management

Created and triaged 40+ GitHub issues, resolved 42, and added observability instrumentation across the platform for better production monitoring.

Verified on GitHub

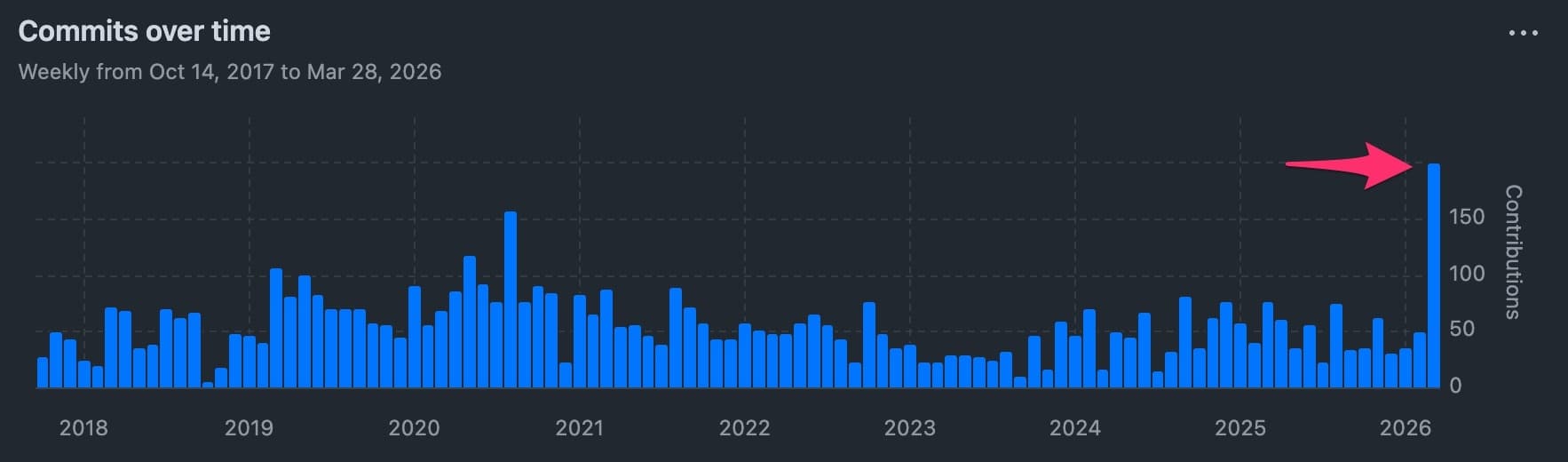

The AI agent's first month was the most active month in the repository's 8+ year history. A massive velocity increase without adding headcount.

Most Active Month in 8+ Years

The spike at the far right is March 2026: the AI agent's first month. Commit volume exceeded every previous peak in the repository's history, dating back to 2017.

The agent started contributing on day one and ramped to full velocity within the first week, sustaining ~16 merged PRs per week for the rest of the month.

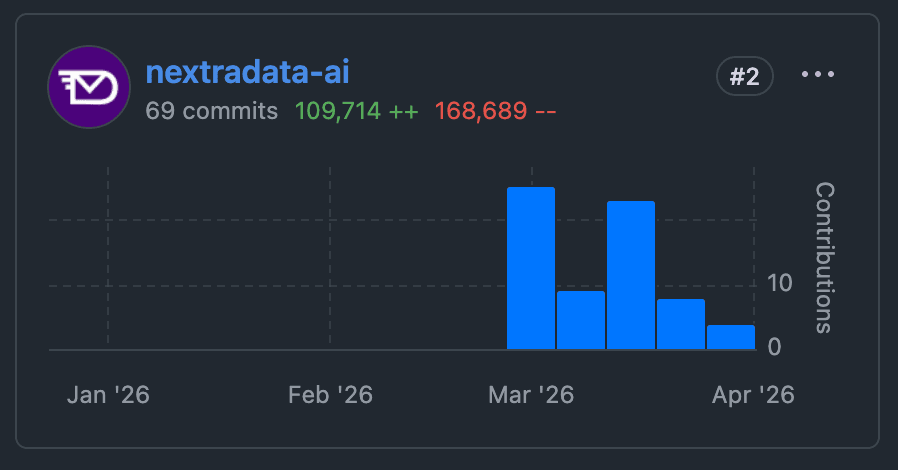

#2 Contributor in a 90-Day Window

69 commits with 109,714 additions and 168,689 deletions. Ranked #2 across the entire team in a 90-day window despite being active for only about 30 of those days.
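For readers who want to reconcile the headline numbers with the GitHub figures, the arithmetic can be sketched in a few lines of Python. This is only an illustrative check using the values quoted in this case study; the variable names are ours, and the team-wide PR total is a rough estimate back-calculated from the rounded 57% share, not a figure reported by NextraData.

```python
# Figures quoted in this case study.
additions = 109_714
deletions = 168_689
merged_prs = 69
days_in_month = 31
team_pr_share = 0.57  # rounded share quoted in the summary

# Total lines touched and net change (negative means code was removed).
lines_touched = additions + deletions
net_lines = additions - deletions

# Average weekly rate over the 31-day month.
prs_per_week = merged_prs / days_in_month * 7

# Assumption: back-calculated from a rounded percentage, so treat as approximate.
estimated_team_prs = round(merged_prs / team_pr_share)

print(f"Lines touched: {lines_touched:,}")
print(f"Net lines changed: {net_lines:,}")
print(f"Merged PRs per week: {prs_per_week:.1f}")
print(f"Estimated team-wide merged PRs: {estimated_team_prs}")
```

The results line up with the rounded figures used elsewhere on this page: 278,000+ lines touched, roughly 59K net lines removed, and about 16 merged PRs per week.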

100% Component Test Coverage

The AI agent modernized the entire front-end testing infrastructure in under three weeks, achieving 100% component coverage across all front-end applications.

This doesn't just improve code quality. It lets the AI verify its own changes visually before every PR, reducing review burden on the human team and catching issues before they reach code review.

Equivalent Engineering Output

A senior front-end developer with equivalent output costs $12,000 to $18,000 per month fully loaded, with 1 to 3 months of onboarding before reaching full productivity. The AI agent required hands-on training and codebase preparation, but delivered meaningful output within the first week.

Foundation First

Month one was primarily an investment month: training the agent, modernizing builds and tests, and enabling self-QA. That foundation is now in place.

Compounding Returns

Starting month two, the majority of the agent's output shifts to features, fixes, and product improvements. The testing infrastructure reduces risk on every future change for the entire team.

Additional Savings

Infrastructure improvements eliminated recurring subscription costs and reduced build times. The 59,000-line codebase reduction cuts CI costs and cognitive load for the entire team.

What could an Internal AI do for your engineering team?

Pull requests, testing infrastructure, code consolidation, and issue management. One AI agent delivering senior-level engineering output at a fraction of the cost.
